Nimble MCP Server is available on the Databricks Marketplace as a one-click install. It creates a secure Unity Catalog connection that gives any Databricks agent access to Nimble’s full web data platform: search, extract, map, crawl, and structured data extraction.

Prerequisites

- A Databricks workspace with the Managed MCP Servers preview enabled (manage previews)

- CREATE CONNECTION privilege on the Unity Catalog metastore

- A Nimble API key: sign up and generate one from Account Settings > API Keys

Install from Databricks Marketplace

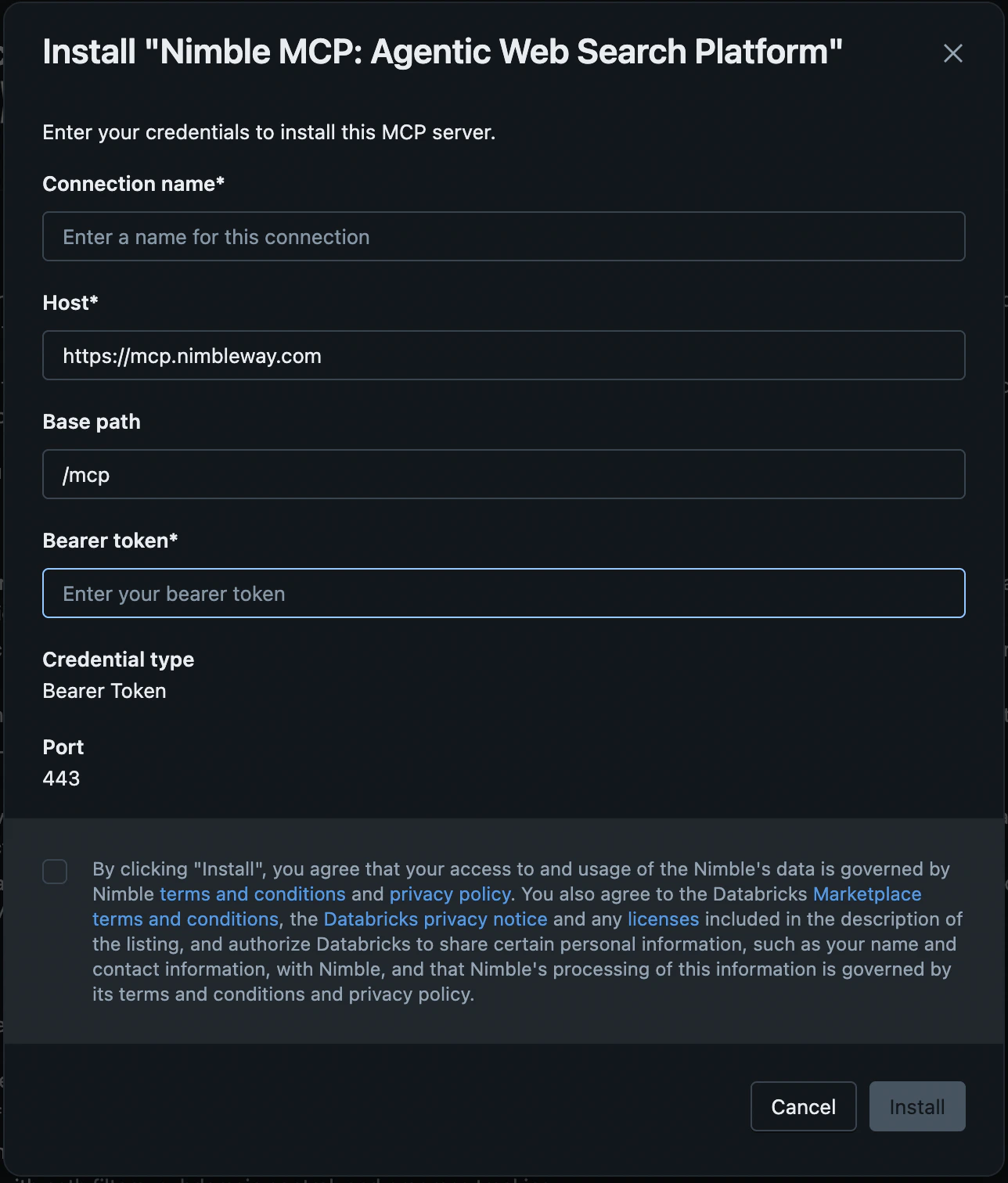

Install and configure the connection

Click Install. In the installation dialog, configure:

| Field | Value |
|---|---|
| Connection name | A name for the Unity Catalog connection (default: nimble-mcp-marketplace) |
| Host | Pre-populated |
| Base path | Pre-populated |
| Bearer token | Your Nimble API key |

Share the Connection

Grant access so team members can use the Nimble MCP server:

- Go to Catalog > Connections and click the Nimble connection.

- Open the Permissions tab and grant USE CONNECTION to the principals that need access.
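The same grant can also be expressed in SQL instead of the Permissions tab. The sketch below only builds the statement string; the helper name `grant_use_connection_sql` and the principal `data-science-team` are illustrative assumptions, not part of the Nimble or Databricks documentation.

```python
def _quote(identifier: str) -> str:
    """Backtick-quote an identifier, escaping any embedded backticks."""
    return "`" + identifier.replace("`", "``") + "`"


def grant_use_connection_sql(connection_name: str, principal: str) -> str:
    """Build a GRANT statement for a Unity Catalog connection.

    Hypothetical helper for illustration; it returns SQL text and runs nothing.
    """
    return (
        f"GRANT USE CONNECTION ON CONNECTION {_quote(connection_name)} "
        f"TO {_quote(principal)}"
    )


# The principal below is a placeholder group name.
stmt = grant_use_connection_sql("nimble-mcp-marketplace", "data-science-team")
print(stmt)
```

In a Databricks notebook, the resulting string could be passed to `spark.sql(stmt)` by a user who is allowed to manage the connection.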

Test in Databricks

AI Playground

Add Nimble MCP tools

Click Tools > + Add tool > MCP Servers > External MCP servers and select the nimble-mcp-marketplace connection.

Databricks Assistant

- Open Databricks Assistant and click the Settings icon.

- Under MCP Servers, click + Add MCP Server > External MCP servers and select the nimble-mcp-marketplace connection.

Use in Agent Code

Verify the connection

Use DatabricksMCPClient to list available tools through the Databricks-managed proxy.
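A minimal sketch of that check follows. It assumes the `databricks-mcp`, `databricks-sdk`, and `nest_asyncio` packages are installed, and that external MCP connections are proxied under `/api/2.0/mcp/external/<connection_name>`, which should be confirmed against the Databricks documentation. The helper names `mcp_proxy_url` and `list_nimble_tools` are hypothetical.

```python
def mcp_proxy_url(workspace_host: str, connection_name: str) -> str:
    """Build the Databricks-managed proxy URL for an external MCP connection.

    Assumption: the proxy path is /api/2.0/mcp/external/<connection_name>.
    """
    return f"{workspace_host.rstrip('/')}/api/2.0/mcp/external/{connection_name}"


def list_nimble_tools(connection_name: str = "nimble-mcp-marketplace") -> list[str]:
    """Return the tool names exposed through the connection (needs a workspace)."""
    # Imported lazily so the URL helper above works without these packages.
    import nest_asyncio
    from databricks.sdk import WorkspaceClient
    from databricks_mcp import DatabricksMCPClient

    nest_asyncio.apply()  # notebooks already run an event loop
    ws = WorkspaceClient()  # picks up notebook or environment credentials
    client = DatabricksMCPClient(
        server_url=mcp_proxy_url(ws.config.host, connection_name),
        workspace_client=ws,
    )
    return [tool.name for tool in client.list_tools()]


# Offline check of the URL shape, using a placeholder workspace host.
print(mcp_proxy_url("https://example.cloud.databricks.com/", "nimble-mcp-marketplace"))
```

Inside a notebook, `list_nimble_tools()` should return a non-empty list when the connection and permissions are set up correctly.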

DatabricksMCPClient.list_tools() calls asyncio.run() internally. Databricks notebooks already have a running event loop, so nest_asyncio.apply() is required to avoid a RuntimeError.

Build a LangGraph agent

Connect a Databricks-hosted LLM to Nimble tools using MultiServerMCPClient and LangGraph.
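One way this wiring might look is sketched below. It is not the cookbook's code. It assumes the `langchain-mcp-adapters`, `langgraph`, `databricks-langchain`, and `databricks-sdk` packages are installed, and that the proxy path is `/api/2.0/mcp/external/<connection_name>` and accepts the workspace's own auth headers. The names `nimble_server_config` and `ask`, and the serving endpoint name passed as `llm_endpoint`, are hypothetical.

```python
def nimble_server_config(workspace_host: str, connection_name: str, auth_headers: dict) -> dict:
    """Build the MultiServerMCPClient entry for the Databricks-proxied Nimble server."""
    url = f"{workspace_host.rstrip('/')}/api/2.0/mcp/external/{connection_name}"
    return {
        "nimble": {
            "url": url,
            "transport": "streamable_http",
            "headers": dict(auth_headers),  # copy so the caller's dict is not shared
        }
    }


async def ask(question: str, llm_endpoint: str,
              connection_name: str = "nimble-mcp-marketplace") -> str:
    """Run one question through a ReAct-style LangGraph agent (needs a workspace)."""
    # Imported lazily so the config helper above works without these packages.
    from databricks.sdk import WorkspaceClient
    from databricks_langchain import ChatDatabricks
    from langchain_mcp_adapters.client import MultiServerMCPClient
    from langgraph.prebuilt import create_react_agent

    ws = WorkspaceClient()
    headers = ws.config.authenticate()  # auth headers for the current identity
    client = MultiServerMCPClient(
        nimble_server_config(ws.config.host, connection_name, headers)
    )
    tools = await client.get_tools()
    agent = create_react_agent(ChatDatabricks(endpoint=llm_endpoint), tools)
    result = await agent.ainvoke({"messages": [("user", question)]})
    return result["messages"][-1].content


# Offline check of the config shape, using placeholder values.
cfg = nimble_server_config("https://example.cloud.databricks.com", "nimble-mcp-marketplace",
                           {"Authorization": "Bearer <token>"})
print(cfg["nimble"]["url"])
```

In a notebook cell, the agent could be invoked with `await ask("...", llm_endpoint="<your serving endpoint>")`, since notebooks support top-level `await`.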

Call tools directly
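A direct tool call could look like the following minimal sketch. It assumes the proxy path `/api/2.0/mcp/external/<connection_name>` and that tool results expose a `content` list whose text items carry a `text` attribute, as in the MCP Python SDK. `call_nimble_tool` and `result_text` are hypothetical helper names, and the tool name must be taken from the server's own tool list.

```python
from types import SimpleNamespace


def result_text(result) -> str:
    """Join the text parts of an MCP tool result, skipping non-text content."""
    parts = [getattr(item, "text", None) for item in getattr(result, "content", None) or []]
    return "\n".join(p for p in parts if p)


def call_nimble_tool(tool_name: str, arguments: dict,
                     connection_name: str = "nimble-mcp-marketplace") -> str:
    """Call one tool through the Databricks proxy and return its text output."""
    # Imported lazily so result_text can be used without these packages.
    import nest_asyncio
    from databricks.sdk import WorkspaceClient
    from databricks_mcp import DatabricksMCPClient

    nest_asyncio.apply()  # notebooks already run an event loop
    ws = WorkspaceClient()
    url = f"{ws.config.host.rstrip('/')}/api/2.0/mcp/external/{connection_name}"
    client = DatabricksMCPClient(server_url=url, workspace_client=ws)
    return result_text(client.call_tool(tool_name, arguments))


# Offline demonstration with a stand-in result object (not real Nimble output).
fake = SimpleNamespace(content=[
    SimpleNamespace(type="text", text="hello"),
    SimpleNamespace(type="image"),  # no text attribute, so it is skipped
])
print(result_text(fake))  # prints "hello"
```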

Skip the agent framework and call Nimble tools directly through the MCP client.

Required packages
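As a quick sanity check before running agent code, the sketch below reports which modules are missing from the current environment. The module list is an assumption inferred from the classes named on this page (DatabricksMCPClient, MultiServerMCPClient, LangGraph, nest_asyncio); the authoritative package list is the one in the Nimble cookbook notebook.

```python
from importlib.util import find_spec

# Assumed top-level import names; adjust to match the cookbook's install cell.
ASSUMED_MODULES = [
    "databricks_mcp",
    "nest_asyncio",
    "langchain_mcp_adapters",
    "langgraph",
    "databricks_langchain",
]


def missing_modules(modules):
    """Return the top-level modules that cannot be found in this environment."""
    return [name for name in modules if find_spec(name) is None]


print("Missing:", missing_modules(ASSUMED_MODULES) or "none")
```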

Sample Notebook

A complete walkthrough with four use cases (web search, page extraction, competitive pricing research, and site mapping) is available in the Nimble cookbook:

Nimble MCP + Databricks Notebook

End-to-end notebook: install packages, verify connection, build a LangGraph agent, and run queries

Resources

- Databricks Marketplace Listing: Install Nimble MCP Server directly from the Marketplace

- Nimble MCP Server Docs: Full MCP Server setup for Claude, Cursor, and other clients

- Databricks External MCP Docs: Databricks documentation for external MCP server connections

- Nimble Studio: Create Web Search Agents visually, no coding required