Batch Processing

When collecting data on a large number of products, a batch request can help streamline the process into a more convenient workflow. Instead of launching a separate request for each product, a batch request allows you to include up to 1,000 URLs in a single request, and have the results delivered to your cloud storage solution.
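Because a single batch accepts at most 1,000 URLs, a longer list has to be split before it is submitted. The sketch below shows one way to do that on the client side. The helper names `chunk_urls` and `build_batch_bodies` and the placeholder URLs are assumptions made for illustration and are not part of the API.

```python
from typing import Dict, Iterator, List

MAX_URLS_PER_BATCH = 1000  # limit stated in this guide


def chunk_urls(urls: List[str], size: int = MAX_URLS_PER_BATCH) -> Iterator[List[str]]:
    """Yield consecutive slices of `urls`, each holding at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(urls), size):
        yield urls[start:start + size]


def build_batch_bodies(urls: List[str], vendor: str) -> List[Dict]:
    """Build one request body per chunk, with `vendor` as a batch-level default."""
    return [
        {"requests": [{"url": u} for u in chunk], "vendor": vendor}
        for chunk in chunk_urls(urls)
    ]


# Hypothetical placeholder URLs, for illustration only.
example_urls = [f"https://www.walmart.com/ip/placeholder-{i}" for i in range(2500)]
bodies = build_batch_bodies(example_urls, "walmart")
print([len(b["requests"]) for b in bodies])  # [1000, 1000, 500]
```

Each body in `bodies` can then be posted as its own batch request.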

The following examples walk through real-world use cases and show how batch requests handle each one efficiently.

Example one - collecting data for multiple products

In this first example, we'll collect data for multiple unique products. To do so, we set the URLs of the products we want to collect in the url fields of the requests object.

curl -X POST 'https://api.webit.live/api/v1/batch/ecommerce' \
--header 'Authorization: Basic <credential string>' \
--header 'Content-Type: application/json' \
--data-raw '{
    "requests": [
        { "url": "https://www.walmart.com/ip/Product-One...." },
        { "url": "https://www.walmart.com/ip/Product-Two...." },
        { "url": "https://www.walmart.com/ip/Product-Three...." }
    ],
    "vendor": "walmart",
    "storage_type": "s3",
    "storage_url": "s3://Your.Repository.Path/",
    "callback_url": "https://your.callback.url/path"
}'

import requests

url = 'https://api.webit.live/api/v1/batch/ecommerce'

headers = {
    'Authorization': 'Basic <credential string>',
    'Content-Type': 'application/json'
}

data = {
    "requests": [
        { "url": "https://www.walmart.com/ip/Product-One...." },
        { "url": "https://www.walmart.com/ip/Product-Two...." },
        { "url": "https://www.walmart.com/ip/Product-Three...." }
    ],
    "vendor": "walmart",
    "storage_type": "s3",
    "storage_url": "s3://Your.Repository.Path/",
    "callback_url": "https://your.callback.url/path"
}

response = requests.post(url, headers=headers, json=data)

print(response.status_code)
print(response.json())

Parameters that are placed outside the requests object, such as vendor, storage_type, storage_url, and callback_url, are automatically applied as defaults to all defined requests.

If a parameter is set both inside and outside the requests object, the value inside the request overrides the one outside.
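The override rule can be pictured as a dictionary merge. The sketch below is a client-side illustration only: the API applies the rule itself, and the helper name `resolve_requests` is an assumption made for this example.

```python
from typing import Any, Dict, List


def resolve_requests(body: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Return the effective parameters of each request in a batch body.

    Top-level keys act as defaults; keys set inside a request take precedence.
    """
    defaults = {key: value for key, value in body.items() if key != "requests"}
    return [{**defaults, **request} for request in body.get("requests", [])]


body = {
    "requests": [
        {"url": "https://www.walmart.com/ip/Product-One...."},
        # This request overrides the batch-level parse default.
        {"url": "https://www.walmart.com/ip/Product-Two....", "parse": True},
    ],
    "vendor": "walmart",
    "parse": False,
}

for effective in resolve_requests(body):
    print(effective["vendor"], effective["parse"])
```

Running a body through such a helper before submitting it can be a quick way to check which values each request will end up using.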

Example two - collecting products from multiple vendors

In the next example, we'll collect products from multiple vendors. To do so, we'll take advantage of the requests object, which allows us to set any parameter inside each request:
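A request body of this kind might look like the sketch below. The Amazon URL is a hypothetical placeholder, and the storage and callback values are carried over from example one. The body is only built and printed here, not sent.

```python
import json

data = {
    "requests": [
        {"url": "https://www.walmart.com/ip/Product-One....", "vendor": "walmart"},
        {"url": "https://www.walmart.com/ip/Product-Two....", "vendor": "walmart"},
        # No vendor is set here, so the batch-level default below applies.
        {"url": "https://www.amazon.com/dp/Product-Three...."},  # hypothetical URL
    ],
    "vendor": "amazon",
    "storage_type": "s3",
    "storage_url": "s3://Your.Repository.Path/",
    "callback_url": "https://your.callback.url/path",
}

print(json.dumps(data, indent=2))
```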

In the above example, we've set Walmart as our desired vendor for the first two requests. On the third request, we haven't set a vendor explicitly, so the default vendor defined outside the requests object is used instead, which in this case is Amazon.

Example three - collecting the same product from multiple countries

Any parameter can be defined inside and/or outside the requests object. We can take advantage of this in some cases by setting our URL once as a default and setting various other parameters in the requests object. For example:
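As a sketch, such a body could set url and vendor once at the top level and vary only country and locale per request. The specific countries and the short locale values used here are assumptions chosen for illustration. The body is only built and printed, not sent.

```python
import json

data = {
    "requests": [
        # Each entry inherits the url and vendor defaults defined below.
        {"country": "US", "locale": "en"},
        {"country": "DE", "locale": "de"},
        {"country": "FR", "locale": "fr"},
    ],
    "url": "https://www.walmart.com/ip/Product-One....",
    "vendor": "walmart",
    "storage_type": "s3",
    "storage_url": "s3://Your.Repository.Path/",
    "callback_url": "https://your.callback.url/path",
}

print(json.dumps(data, indent=2))
```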

In the above example, three total requests would be made for the same Walmart product - each time from a different country and locale.

Request Options

Batch requests use the same parameters as asynchronous requests, with the exception of the requests object.

requests

Optional

Defines custom parameters for each request within the batch. Any of the parameters below can be used in an individual request.

vendor

Required

String | walmart, amazon

url

Required

The product or search pages to collect.

country

Optional (default = all)

String | Country used to access the target URL. Use ISO Alpha-2 country codes, e.g. US, DE, GB.

state

Optional

String | For targeting US states (does not include regions or territories in other countries). Two-letter state code, e.g. NY, IL.

city

Optional

String | For targeting large cities and metro areas around the globe. When targeting major US cities, you must include state as well. Click here for a list of available cities.

zip

Optional

String | A 5-digit US zip code. If provided, the closest store to the provided zip code is selected.

locale

Optional (default = en)

String | LCID standard locale used for the URL request. Alternatively, use auto to select the locale automatically based on country targeting.

parse

Optional (default = false)

Enum: true | false. Instructs Nimble whether to structure the results as JSON or return the raw HTML.

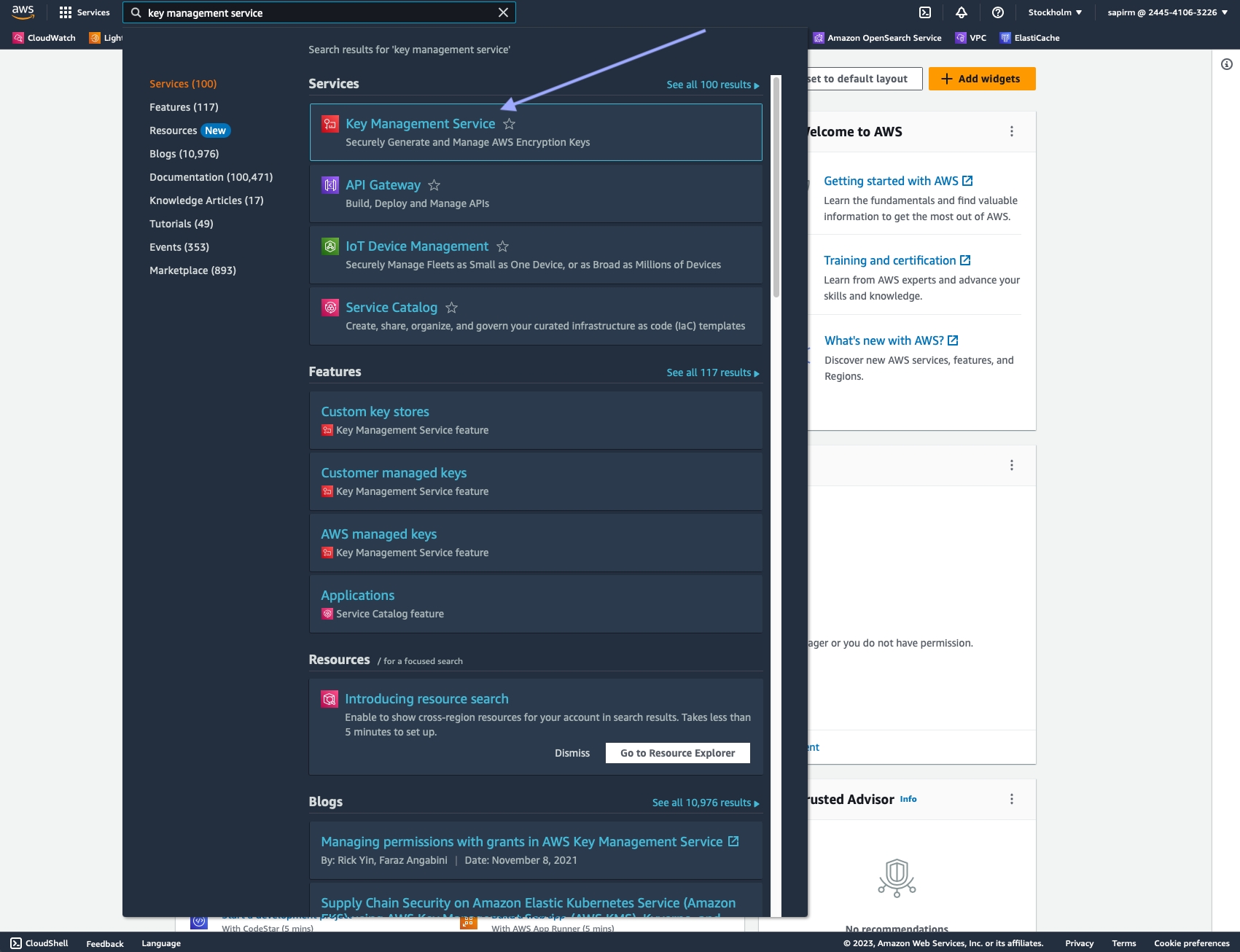

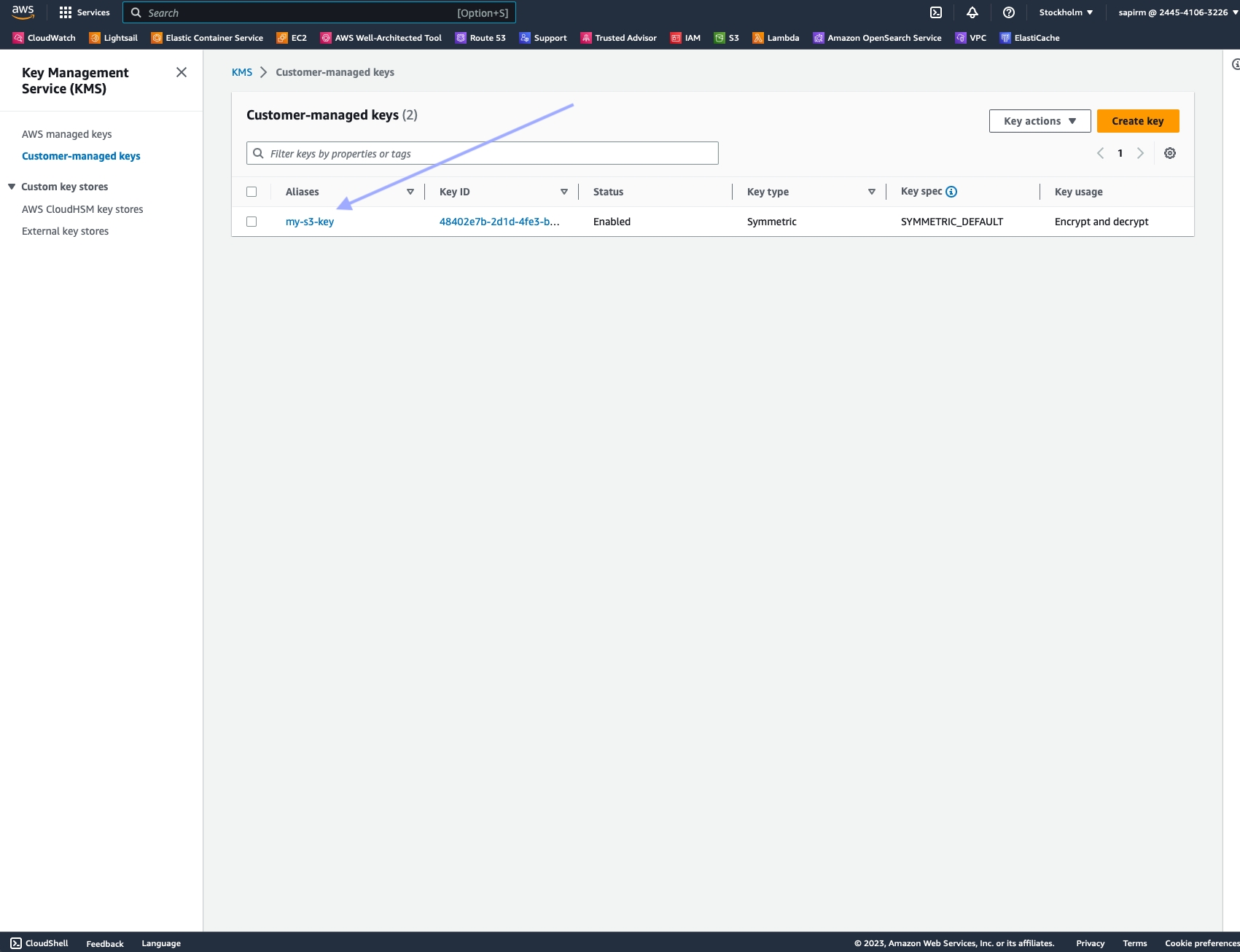

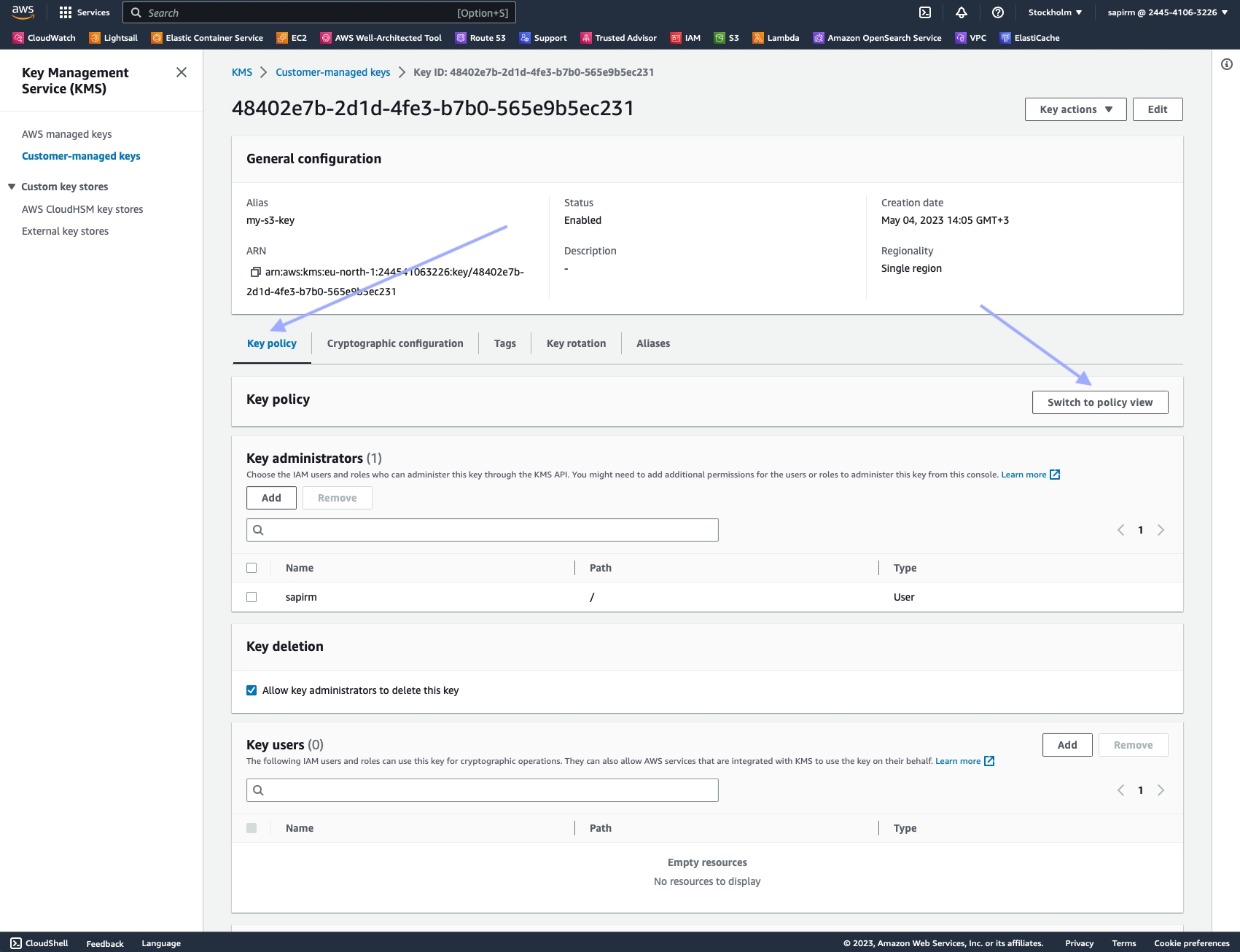

storage_type

Optional

Enum: s3 | gs. Use s3 for Amazon S3 and gs for Google Cloud Platform. Leave blank to enable Push/Pull delivery.

storage_url

Optional

Repository URL, e.g. s3://Your.Bucket.Name/your/object/name/prefix/. Leave blank to enable Push/Pull delivery.

callback_url

Optional

A URL to call back once the data is delivered. The E-commerce API sends a POST request to the callback_url with the task details once the task is complete (this notification does not include the requested data).

storage_compress

Optional (default = false)

When set to true, the response saved to the storage_url will be compressed using GZIP format. This can help reduce storage size and improve data transfer efficiency. If not set or set to false, the response will be saved in its original uncompressed format.

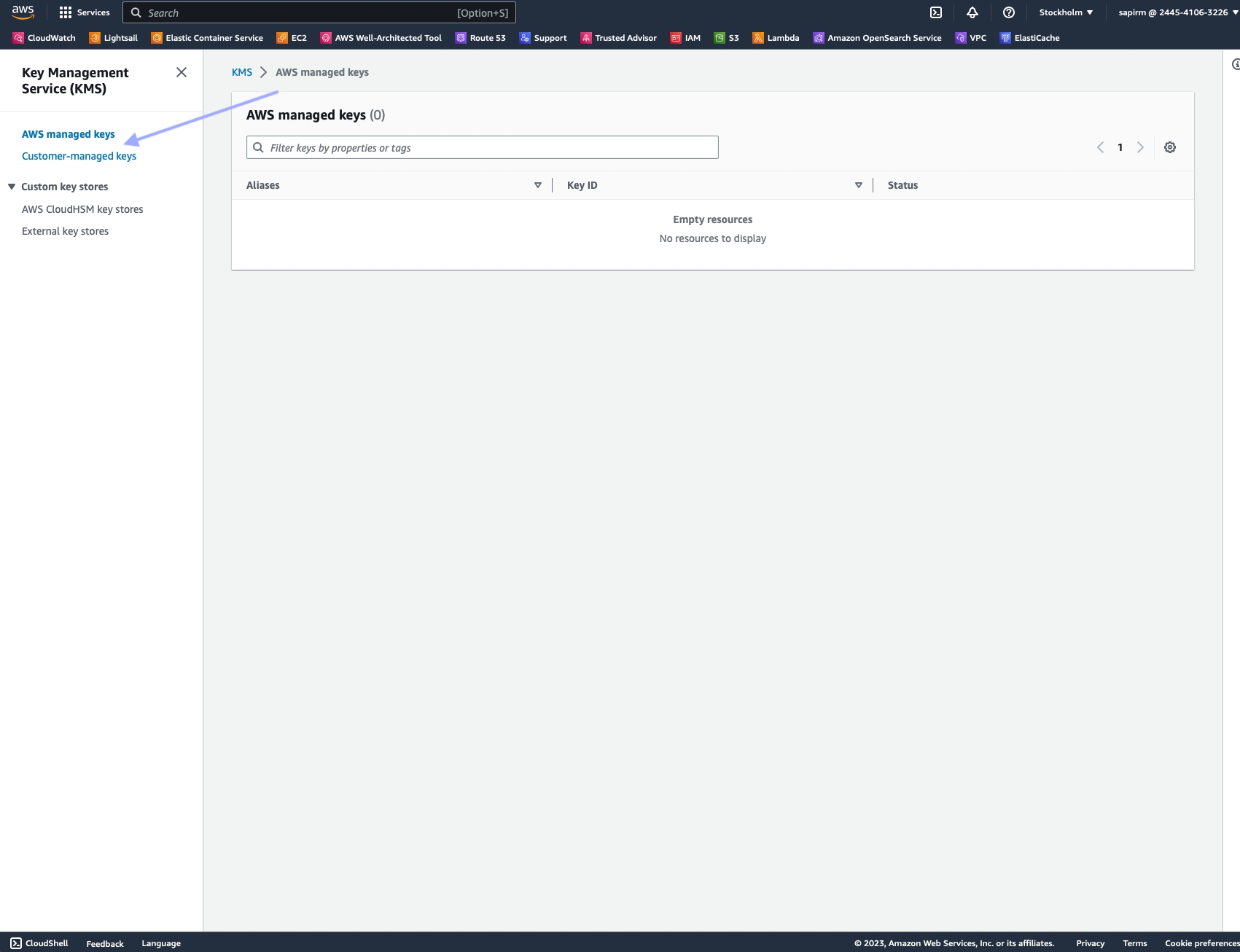

Please add Nimble's system/service user to your GCS or S3 bucket to ensure that data can be delivered successfully.
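When storage_compress is enabled, delivered files need to be decompressed before use. The sketch below decodes one result file with Python's standard gzip module, assuming the file holds JSON (that is, parse was set to true). The helper name `load_result` and the sample payload are hypothetical, and downloading the file from the bucket is left out.

```python
import gzip
import json
from typing import Any


def load_result(raw: bytes, compressed: bool) -> Any:
    """Decode the bytes of one delivered result file, assuming JSON content."""
    if compressed:
        raw = gzip.decompress(raw)
    return json.loads(raw.decode("utf-8"))


# Simulated file contents; a real file would be read from the storage bucket.
sample = json.dumps({"title": "placeholder"}).encode("utf-8")

print(load_result(gzip.compress(sample), compressed=True))
print(load_result(sample, compressed=False))
```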

Response

Initial Response

Batch requests operate asynchronously, and treat each request as a separate task. The result of each task is stored in a file, and a notification is sent to the provided callback any time an individual task is completed.

Checking batch progress and status

POST https://api.webit.live/api/v1/batches/<batch_id>/progress

Like asynchronous tasks, the status of a batch is available for 24 hours.

Response

The progress of a batch is reported as a fraction between 0 and 1.

Once a batch is finished, its progress is reported as 1.
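A simple polling loop can wait for that value to reach 1. In the sketch below, `fetch_progress` is a hypothetical callable that would call the progress endpoint and return the reported number. The response field that carries the number is not shown in this guide, so extracting it is left to that callable. The interval and retry limits are arbitrary choices.

```python
import time
from typing import Callable


def wait_for_batch(
    fetch_progress: Callable[[], float],
    interval_seconds: float = 30.0,
    max_checks: int = 120,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll until the reported progress reaches 1.

    Returns True if the batch finished within `max_checks` checks, else False.
    """
    for attempt in range(max_checks):
        if fetch_progress() >= 1:
            return True
        if attempt < max_checks - 1:
            sleep(interval_seconds)  # wait before the next check
    return False


# Simulated progress values stand in for real API calls.
simulated = iter([0.0, 0.4, 1.0])
print(wait_for_batch(lambda: next(simulated), sleep=lambda _: None))  # True
```

Passing `sleep` as a parameter keeps the loop easy to test without real delays.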

Retrieving Batch Summary

Once a batch is finished, you can retrieve a summary of the completed tasks by using the following endpoint:

GET https://api.webit.live/api/v1/batches/<batch_id>

For example:
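The sketch below prepares such a request with Python's standard urllib module, without sending it. The helper name `build_summary_request` and the batch ID value are hypothetical; the Authorization header follows the format used in the earlier examples.

```python
import urllib.request

BASE_URL = "https://api.webit.live/api/v1"


def build_summary_request(batch_id: str, credential: str) -> urllib.request.Request:
    """Prepare (but do not send) a GET request for a batch summary."""
    return urllib.request.Request(
        f"{BASE_URL}/batches/{batch_id}",
        headers={"Authorization": f"Basic {credential}"},
        method="GET",
    )


# "example-batch-id" is a hypothetical placeholder for a real batch ID.
request = build_summary_request("example-batch-id", "<credential string>")
print(request.get_method(), request.full_url)

# Sending it would look like: urllib.request.urlopen(request)
```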

The response object lists the status of the overall batch, as well as the individual tasks and their details:


Response codes

200 - OK

400 - The requested resource could not be reached

401 - Unauthorized/invalid credential string

500 - Internal service error

501 - An error was encountered by the proxy service
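Client code can map these codes to readable messages when logging failures. The sketch below is one way to do so. The names `STATUS_MEANINGS` and `describe_status` are assumptions made for illustration, and the fallback text for unlisted codes is not part of the API.

```python
# Meanings taken from the response codes listed above.
STATUS_MEANINGS = {
    200: "OK",
    400: "The requested resource could not be reached",
    401: "Unauthorized/invalid credential string",
    500: "Internal service error",
    501: "An error was encountered by the proxy service",
}


def describe_status(code: int) -> str:
    """Return the documented meaning of a status code, or a generic fallback."""
    return STATUS_MEANINGS.get(code, f"Unlisted status code {code}")


for code in (200, 401, 418):
    print(code, describe_status(code))
```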
